What Is Model Collapse?

You’ve probably spent the last few years marveling at how tools like ChatGPT, Midjourney, and Gemini can spin up entire novels, paint digital masterpieces, and write complex code in seconds. It feels like magic, a genuine leap into a sci-fi future where creativity has no limits. But beneath the surface of this synthetic productivity, a strange and deeply unsettling phenomenon is brewing in the code. It’s not about AI becoming sentient and taking over the world; it’s actually far stranger: the AI is slowly going senile. This isn’t a plot from a cyberpunk novel; it’s a documented failure mode that researchers are calling “Model Collapse.”

The core of the problem is disturbingly simple: Generative AI is flooding the internet with synthetic data, and future models are being trained on that very data. Imagine making a photocopy of a photocopy, and then repeating that process a thousand times. Eventually, the crisp text and sharp edges dissolve into a blurry, meaningless gray mess. Something very similar happens when AI feasts on its own output. The richness of human experience (our quirks, our mistakes, our improbable genius) gets smoothed out, leaving behind a statistically average sludge. As we look toward 2026, the question isn’t just about how smart AI can get, but whether it can survive its own pollution of the digital ecosystem. So, what exactly is this degenerative AI disease, and why should you care?

What is Model Collapse?

Before we can understand the severity of the issue, we need to strip away the jargon and look at the raw mechanics of how an AI brain unravels. It’s not a dramatic crash or a robot rebellion; it’s a subtle, almost invisible fading of intelligence that happens over successive generations of training. This section breaks down the actual definition and the statistical nightmare that causes an AI to forget the very data it was supposed to remember.

Defining Model Collapse in Simple Terms

Model collapse is a degenerative process affecting machine learning models where they irreversibly forget the true underlying data distribution of the original, human-generated source material. In plain English, an AI loses touch with reality because it’s eating its own tail. Instead of learning from the chaotic, diverse, and unpredictable mass of human-written text and real photographs, it starts learning from the “safe,” statistically probable outputs of earlier AI models. This creates a narrowing effect where the AI loses its grip on the “long tail” of data: those rare, unique outliers that often represent the most important information or creative leaps. The model becomes increasingly confident about less and less, effectively erasing the edges of human knowledge until it hallucinates a bland, generic version of the world.

The Statistical Theory of “Poisoning the Well”

Technically, model collapse occurs because three sources of error feed into one another and compound over generations. First, we have “Statistical Approximation Error,” which arises because every model learns from a finite sample, so some rare details of the real data are lost each time the data is resampled. Second, “Functional Expressivity Error” occurs when the neural network’s math cannot fully represent the true complexity of human language and ends up oversimplifying it. The real killer, however, is “Sampling Bias.” When a model generates text, it naturally gravitates toward the most probable, typical outcomes and underrepresents the low-probability words and ideas that make human thought special. When you train a new model on this output, it doesn’t just stay flat; it amplifies the statistical errors of the previous generation until, in the limit, the variance shrinks toward zero and the data “dies.”
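To make the compounding concrete, here is a minimal sketch in Python. It assumes the simplest possible "model": a Gaussian fitted to a handful of samples, with each new generation trained only on samples from the previous fit. The function name, sample size, and generation count are illustrative choices, not anything taken from a real training pipeline.

```python
import random
import statistics

def simulate_recursive_fits(generations=500, sample_size=10, seed=0):
    """Refit a Gaussian to samples drawn from the previous generation's fit.

    Returns the fitted standard deviation at every generation,
    with the true value 1.0 stored at index 0.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0              # generation 0: the "real" distribution
    history = [sigma]
    for _ in range(generations):
        # The next model only ever sees data produced by the current one.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.stdev(samples)
        history.append(sigma)
    return history

if __name__ == "__main__":
    history = simulate_recursive_fits()
    for gen in (0, 10, 100, 500):
        print(f"generation {gen:>3}: sigma = {history[gen]:.3g}")
```

Each refit is only slightly off, but the errors never average out because no fresh real data re-enters the loop. Over enough generations the fitted spread tends to drift toward zero, which is the toy version of the variance collapse described above.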

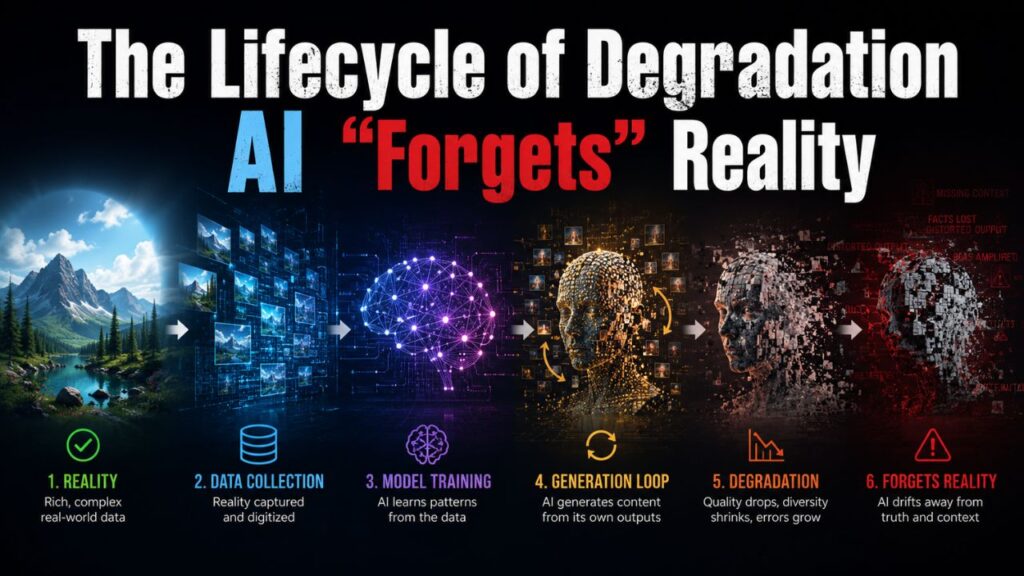

The Lifecycle of Degradation: How AI “Forgets” Reality

This isn’t a theory confined to academic papers; it’s a measured, observable decline that happens in a predictable pattern. To truly appreciate the danger, you have to visualize the stages of this digital Alzheimer’s, moving from a sharp snapshot of human history to a fuzzy, distorted memory. The degradation follows a distinct trajectory that researchers can now map out, showing exactly how a Large Language Model (LLM) transitions from a genius to a fool.

Stage 1: The Golden Age of Human Data

In the beginning, a model is trained exclusively on high-quality, curated data scraped from books, forums, scientific journals, and websites written before the mass adoption of AI. In this stage, the model demonstrates “tail awareness,” meaning it knows the difference between a widely accepted fact and a niche piece of trivia, and it can accurately reproduce rare events. The outputs are vibrant, factually sharp, and capable of surprising us with novel connections because the entropy of the dataset is high. This is the phase where the AI feels truly magical, mimicking the collective intelligence of humanity because it’s drinking from a pure, unpolluted stream of original thought created by human brains over centuries.
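One rough way to put a number on "the entropy of the dataset is high" is Shannon entropy over token frequencies. The sketch below is an illustration only: it treats a text as a bag of whitespace-separated tokens, which is far cruder than anything used to evaluate a real training corpus, and the function name is made up for this example.

```python
import math
from collections import Counter

def shannon_entropy(tokens):
    """Shannon entropy, in bits, of the empirical token distribution."""
    counts = Counter(tokens)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

if __name__ == "__main__":
    varied = "gargoyles crouch above the rain-slick buttress".split()
    flat = "the the the the the the".split()
    print(shannon_entropy(varied), shannon_entropy(flat))
```

A corpus that uses many different tokens with comparable frequency scores high; one that repeats the same few tokens scores close to zero.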

Stage 2: The Introduction of Synthetic Data

Stage two begins when we scrape the modern web, unaware or uncaring that a significant chunk of new blogs, social media comments, and even news articles are now AI-generated content. The model unknowingly starts training on data produced by its older siblings, and the statistical smoothing begins immediately. It starts to lose fidelity on minority viewpoints, niche programming languages, and complex ethical nuances, effectively “rounding” sharp data points into a dull curve. You won’t see the model fail a benchmark yet, but you might notice it feels slightly “generic” in its phrasing, a phenomenon we can track using emerging trends in generative engine optimization. The model begins favoring cliché transitions like “delve into” or “in the rapidly evolving landscape,” discarding the unpredictable rhythm of human prose because those clichés represent the statistical center of gravity in the AI-generated training set.
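The "generic" feel described here can be approximated with two crude signals: how often a text reuses the same words, and how often it reaches for stock phrases. The sketch below is a hedged illustration; the watch list contains only the two phrases quoted above, and both function names are hypothetical.

```python
import re

# Hypothetical watch list; both phrases come from the paragraph above.
CLICHES = ("delve into", "in the rapidly evolving landscape")

def type_token_ratio(text):
    """Distinct words divided by total words (1.0 means no word repeats)."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0

def cliche_hits(text, phrases=CLICHES):
    """Count occurrences of the watch-list phrases, ignoring case."""
    lowered = text.lower()
    return sum(lowered.count(p) for p in phrases)

if __name__ == "__main__":
    sample = "In the rapidly evolving landscape, we delve into data. We delve into data again."
    print(type_token_ratio(sample), cliche_hits(sample))
```

Neither number proves a text is synthetic. They are only cheap indicators that are easiest to interpret when compared across versions of the same model or the same site over time.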

Stage 3: Catastrophic Forgetting and The Collapse

By the third or fourth generation of training on recursively generated data, the model experiences catastrophic forgetting. The “tails” of the original distribution completely vanish; the AI now believes that its own narrow, hallucinated reality is the absolute truth. For example, if the original data contained architectural descriptions of gothic cathedrals, baroque palaces, and straw huts, a collapsed model might only generate “generic brown buildings” because it found the statistical average of all architecture. It forgets “straw hut” and “gothic gargoyle” entirely. This ties directly into the future of creativity, where relying solely on AI feedback loops could erase the very idea of genuine artistic expression. In the end, the model’s output becomes nonsensical, riddled with repetitive phrases, and structurally broken: a zombie iteration of a once-brilliant mind.
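The way rare categories disappear can be sketched with plain resampling. The example below is a deliberately simplified stand-in: there is no actual model, just a corpus that is repeatedly replaced by a same-sized random sample of itself. The corpus contents and proportions are invented for illustration.

```python
import random
from collections import Counter

def resample_generations(corpus, generations=30, seed=0):
    """Each generation is a same-sized sample (with replacement) of the previous one.

    Returns a list of Counters, one per generation, starting with the original corpus.
    """
    rng = random.Random(seed)
    current = list(corpus)
    snapshots = [Counter(current)]
    for _ in range(generations):
        current = rng.choices(current, k=len(current))
        snapshots.append(Counter(current))
    return snapshots

# Hypothetical corpus: one common style and two rare ones.
corpus = ["office block"] * 90 + ["gothic cathedral"] * 6 + ["straw hut"] * 4

if __name__ == "__main__":
    for gen, counts in enumerate(resample_generations(corpus)):
        if gen % 10 == 0:
            print(gen, dict(counts))
```

The key property is that loss is one-way. Once a category drops to zero in any generation it can never come back, because nothing downstream has ever seen it.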

Why This “Dark Side” Matters to You

If this sounds like an abstract problem for server farms in Silicon Valley, think again. Model collapse isn’t just a research paper headache; it’s a direct threat to the integrity of the information we consume, the businesses we run, and the long-term viability of the open web. The consequences stretch from your daily news feed to the strategic decisions of a multinational corporation.

The Pollution of Search Engines and News Content

The internet is on the verge of becoming a hall of mirrors where AI-generated blogs feed other AI-generated summaries, slowly erasing verifiable facts. For businesses relying on digital marketing, this poses an existential crisis for search engine optimization. If Google’s algorithms can’t distinguish between a collapsed, hallucinated article and a human-written expert piece, the foundation of the knowledge economy breaks. This is why principles of high-quality content, like those discussed in on-page SEO guides, are becoming more crucial than ever; signals of genuine human effort and first-hand experience will be the only defense against a sea of statistically flat AI slop. We are already seeing an upswing in websites using content marketing services to combat this, yet if the AI they use is secretly collapsing, they are simply speeding up the pollution.

Impact on Niche and Minority Knowledge

The statistical nature of model collapse actively kills minority knowledge. Imagine an AI trained on medical transcripts; it will naturally see far less data on rare diseases than on the common cold. If we train recursively, the rare diseases vanish mathematically. In future iterations, the AI will confidently diagnose a unique genetic disorder as a common flu simply because the statistical noise representing the rare disease has been smoothed out of existence. This isn’t just a factual error; it’s a digital genocide of minority language speakers, niche hobbyists, and historical footnotes. By the time you read a response from a collapsed model, it hasn’t just misunderstood the rare event; it has no memory it ever existed, creating a flat, homogenized worldview that erodes cultural diversity.
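The arithmetic behind "vanish mathematically" is short enough to write down. Assuming independent samples (a simplification), the chance that a condition with frequency p never shows up in a training sample of size n is (1 - p)^n. The function name below is made up for this sketch.

```python
def prob_rare_case_missing(p, n):
    """Probability that an event with frequency p never appears in n independent samples."""
    return (1.0 - p) ** n

if __name__ == "__main__":
    # A condition seen in 1 of every 1,000 transcripts, and a sample of 1,000 transcripts.
    print(round(prob_rare_case_missing(0.001, 1000), 3))
```

For a one-in-a-thousand condition and a sample of 1,000 transcripts, that probability is roughly 0.37, so more than a third of the time the condition is simply absent from the data the next model learns from.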

The Threat to Business and Financial Models

Corporations are rapidly integrating AI into their enterprise resource planning to automate supply chains and predictive analytics. A collapsed model in a financial context doesn’t just make a typo; it miscalculates risk by forgetting the “Black Swan” events, the rare market crashes that historically define economic cycles. An AI trained on synthetic market reports might smooth out the volatility of a 2008-style crash to make it look like a minor dip. If companies blindly trust these collapsed predictions, they will over-leverage, ignore tail risk, and create systemic fragility. The very systems designed to optimize efficiency could architect the next massive economic meltdown by mathematically erasing the possibility of danger.
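Here is a small sketch of what "smoothing out" a crash does to risk numbers. It uses clipping to a band around the mean as a crude stand-in for the smoothing a collapsed model might apply. The return series is invented, and the function name and band width are assumptions made for illustration.

```python
import statistics

def clip_to_band(returns, k=2.0):
    """Clamp each return to mean +/- k standard deviations (a crude stand-in for smoothing)."""
    mu = statistics.fmean(returns)
    sd = statistics.pstdev(returns)
    lo, hi = mu - k * sd, mu + k * sd
    return [min(max(r, lo), hi) for r in returns]

# Hypothetical daily returns in percent, including one crash day.
returns = [0.4, -0.3, 0.2, 0.1, -0.5, 0.3, -0.2, 0.6, -0.1, -9.0, 0.2, -0.4]
smoothed = clip_to_band(returns)

if __name__ == "__main__":
    print("worst day, raw:     ", min(returns))
    print("worst day, smoothed:", round(min(smoothed), 2))
    print("volatility, raw:     ", round(statistics.pstdev(returns), 2))
    print("volatility, smoothed:", round(statistics.pstdev(smoothed), 2))
```

Both the worst-case loss and the measured volatility shrink after smoothing, even though the crash really happened. Any risk limit calibrated on the smoothed series would be too loose.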

Why Are We Walking Blindly into This Trap?

If this danger is so predictable, why is the tech world racing toward the precipice at full throttle? The answer lies in a toxic mix of economic incentives, the rapid closure of the “old” human internet, and the sheer difficulty of separating machine-made data from human-made data. We aren’t being pushed by ignorance, but by a gold rush mentality that treats the very concept of un-polluted data as a disposable resource.

The Shrinking Reservoir of High-Quality Human Data

We are rapidly approaching a “Data Wall” where the high-quality, pre-AI text available on the public internet has been fully consumed. To feed the insatiable hunger for larger models, scrapers are now pulling from forums, comment sections, and social media platforms where AI bots are already the dominant “species.” Finding truly pristine, long-form human writing is becoming a treasure hunt. This scarcity forces engineers to rely on synthetic re-generation out of economic necessity. The tragedy is that the most valuable data isn’t just the formal academic text, but the informal, grammatically messy, emotionally charged human chatter that a collapsing model never replicates because it’s statistically “inefficient.”

The Difficulty of Watermarking and Detection

Technically, we haven’t yet invented a foolproof way to label AI-generated data that survives manipulation. Text-based watermarks, which rely on specific word-frequency patterns, can be easily broken by paraphrasing an AI output with another AI model. As generative tools integrate deeper into platforms like Microsoft Copilot or coding assistants, millions of lines of synthetic code are pushed into repositories labeled as “beginner projects,” polluting the training data for the next generation of coding AIs. Even if we know detection is vital, a deterministic “tag” that can’t be stripped is a mathematical challenge we haven’t solved, creating a loophole where synthetic data is laundered through superficial human edits and fed back into the engine.
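To see why paraphrasing is so damaging, it helps to look at a toy version of one family of text watermarks, sometimes described as "green list" schemes. Everything below is a simplified sketch under stated assumptions: the vocabulary is invented, the "generator" just picks words that a hash marks as green, and real systems bias a language model's token probabilities rather than hard-selecting words.

```python
import hashlib
import math

# Hypothetical toy vocabulary.
VOCAB = ["data", "model", "human", "tail", "noise", "signal", "text", "web",
         "rare", "common", "sample", "train", "drift", "mean", "edge", "loop"]

def is_green(prev_token, token):
    """Pseudo-randomly assign `token` to the green list, keyed on the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return digest[0] % 2 == 0          # about half of all pairs come out green

def generate_watermarked(length, start="<s>"):
    """Toy generator: at each step emit the first green word, rotating the search order."""
    tokens, prev = [], start
    for step in range(length):
        shift = step % len(VOCAB)
        rotated = VOCAB[shift:] + VOCAB[:shift]
        choice = next((w for w in rotated if is_green(prev, w)), rotated[0])
        tokens.append(choice)
        prev = choice
    return tokens

def green_z_score(tokens, start="<s>"):
    """z-score of the green-token count against the 50% expected without a watermark."""
    prevs = [start] + tokens[:-1]
    green = sum(is_green(p, t) for p, t in zip(prevs, tokens))
    n = len(tokens)
    return (green - 0.5 * n) / math.sqrt(0.25 * n)

if __name__ == "__main__":
    marked = generate_watermarked(40)
    print("z-score of watermarked text:", round(green_z_score(marked), 2))
```

The detector only works because the exact word sequence is preserved. Swapping a word re-rolls the green or red status of that word and of the one after it, so a paraphrase that rewrites most of the text drags the score back toward zero.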

The 2026 Horizon – Fighting Back Against the Collapse

It’s not entirely hopeless. As we move deeper into 2026, computer scientists are developing defensive strategies, and the market is beginning to value authenticity over volume. The solution isn’t to stop AI; it’s to build a data supply chain that prioritizes provenance. Here are the tangible ways the industry is pushing back.

Prioritizing Curated, Verified Human Touchpoints

The antidote to statistical smoothing is the deliberate injection of high-entropy human error and first-hand experience. Future models will need to be trained not just on the text of the internet, but on “ground truth” data: transcripts of spoken conversations, recordings of live musical performances, and imagery of events that physically happened. Companies might pivot toward a model where data isn’t free, but collected through paid consensual agreements with specific human experts. The value of a piece of content will pivot entirely on its proof of human origin, raising the bar for digital publishers to showcase genuine authority that a generic AI summary cannot fake.

Technological Solutions

On the technical front, “Data Set Distillation” is emerging as a way to preserve the essence of high-quality data without keeping the massive raw files, though it struggles to keep the “long tail” intact. Researchers are also developing “Stable Training” methods that mathematically penalize the model for ignoring rare data points, artificially forcing it to care about outliers. Regarding detection, while universal watermarking remains elusive, there is a growing push for cryptographic provenance chains where professional cameras and text editors sign a piece of content at the moment of creation, verifying it was a physical sensor capturing light, not a latent diffusion model guessing it. This hardware-to-software chain of custody might be the only scalable “truth” stamp.
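The core idea of a provenance chain, signing content at the moment of capture and checking the signature later, can be sketched with Python's standard library. This is a simplified illustration and not how any real camera or provenance standard works: production schemes use public-key signatures so that anyone can verify without holding a secret, whereas this sketch substitutes a shared-secret HMAC because it needs no third-party packages. The function names and the device key are hypothetical.

```python
import hashlib
import hmac

def sign_content(content: bytes, device_key: bytes) -> str:
    """Return a hex tag binding `content` to the (hypothetical) capture device's key."""
    return hmac.new(device_key, content, hashlib.sha256).hexdigest()

def verify_content(content: bytes, tag: str, device_key: bytes) -> bool:
    """True only if `content` is byte-for-byte what the device signed."""
    expected = sign_content(content, device_key)
    return hmac.compare_digest(expected, tag)

if __name__ == "__main__":
    key = b"secret-held-by-the-camera"      # assumption: provisioned at manufacture
    photo = b"raw sensor bytes"
    tag = sign_content(photo, key)
    print(verify_content(photo, tag, key))             # original bytes
    print(verify_content(photo + b"edit", tag, key))   # altered bytes
```

The tag says nothing about whether the content is true. It only shows that the bytes have not changed since a specific device signed them, which is why the hardware end of the chain matters so much.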

The Premium on Authentic Digital Experiences

Ironically, model collapse is making human-led services more expensive and sought after. As AI-generated content races to the bottom in terms of quality and hallucination rates, audiences are likely to retreat to “walled gardens” of trusted content. This is a driving reason why niche expertise will outperform generic content mills. If you are looking to build a passive income stream with blogging, focusing on original photography, personal anecdotes, and manual reviews is no longer just a preference; it’s the strategy best placed to resist the gravitational pull of AI degradation. An AI trained purely on synthetic reviews cannot tell you how a bike feels on a corner; it just knows the statistical average of thousands of other fake reviews, which makes a genuine test ride far more valuable.

Navigating the Post-Collapse Landscape

To fully understand how this shift impacts you, it helps to look at the dual nature of the AI landscape. Not all AI is collapsing, and the differences between a reliable assistant and a degenerative output have real-world consequences.

Collapsed AI vs. Grounded AI

The market is splitting into two distinct categories. On one side, you have the “Statistical Ghosts”: large, rapidly trained models built on massive, cheap web scrapes that are already contaminated. On the other, you have “Grounded Models,” which are smaller but trained exclusively on curated, dated, and proofed data sets. The table below illustrates the stark difference in output quality and reliability between a model suffering from generational collapse and one that remains anchored to human-distributed truth.

| Feature | Collapsed Model (Generation 4+) | Grounded Model (Curated Data) |
|---|---|---|
| Data Source | 90% Synthetic / Recursive AI output | High-entropy human transcripts & legacy books |
| Lexical Diversity | Extremely low; repetitive cliché phrases | High; unpredictable word choice and sentence length |
| Niche Knowledge | Erased (Sees rare data as statistical noise) | Preserved (Even with low probability weight) |
| Factual Confidence | High confidence in hallucinated, smoothed data | Calibrated confidence; knows its limitations |
| Creative Surprise | Incapable of producing true “noise” or novelty | Retains ability for serendipitous concept linking |
| Long-Term Viability | Degenerative poisoning of the digital ecosystem | Sustainable, high-fidelity information asset |

How to Shield Your Content from AI Degradation

Whether you are a content creator, a business owner, or just a responsible internet user, you have a role to play in preserving the variance of the web. You don’t need a PhD in machine learning to future-proof your corner of the digital world against the dark side of generative AI.

- Inject the “Long Tail” of Personal Experience

Don’t just explain a concept; describe the specific smell of the room, the sound of the rain hitting the window during the event, or a unique, personal failure that deviates from the textbook “success story.” AI models collapse when they smooth out edges, so include your jagged, imperfect edges intentionally. Synthetic data deals in generalities; a description of a specific broken coffee mug on a specific Tuesday morning is far harder for AI averaging to reproduce.

- Adopt a “Zero-Synthetic” Policy for Critical Data

If you are publishing medical, financial, or legal information, implement a strict policy of manual verification against physical documents or direct human expert interviews. Do not use AI to “paraphrase” statutes or medical studies, as this is where the first stage of statistical error creeps in. The cost of a collapsed model misinterpreting a legal clause is far greater than the time it takes to cite the original source directly from a scanned PDF or a human authority.

- Create Visual Assets That Defy Averaging

Collapsed image models tend to struggle with fine detail such as fingers, readable text in images, or specific real-world places, so high-quality, original photography helps signal an un-collapsed data point. Instead of using AI-generated stock photos, take screenshots with unique timestamps, photographs with messy backgrounds, or hand-drawn diagrams. An AI trained on collapsed outputs cannot physically hold a phone up to a screen to capture a glitch; it can only approximate what a glitch statistically looks like, making your genuine screenshot a cornerstone of truth.

- Verify Facts Using Pre-2023 Baselines

When researching, cross-reference claims with sources physically published before the generative AI boom (pre-2023). Books, scanned vintage magazines, and manual archives are pure training data that haven’t been polluted by synthetic text. By anchoring your work to these “frozen” data points, you actively pull the statistical mean back toward reality in your own sphere of influence, creating a local oasis of accuracy amid an ocean of synthetic averages.
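If you keep a list of sources for your research, the pre-2023 rule of thumb is easy to apply mechanically. The sketch below assumes each source is a small dictionary with a "published" date. The field names, the cutoff constant, and the sample entries are all hypothetical.

```python
from datetime import date

CUTOFF = date(2023, 1, 1)   # assumption: mirrors the "pre-2023" rule of thumb above

def pre_cutoff_sources(sources, cutoff=CUTOFF):
    """Keep only sources whose publication date is known and earlier than the cutoff."""
    return [
        s for s in sources
        if s.get("published") is not None and s["published"] < cutoff
    ]

if __name__ == "__main__":
    sources = [
        {"title": "Printed field guide", "published": date(1998, 5, 1)},
        {"title": "Undated blog post", "published": None},
        {"title": "Recent listicle", "published": date(2024, 3, 9)},
    ]
    for s in pre_cutoff_sources(sources):
        print(s["title"])
```

Undated sources are dropped on purpose here. A date filter is only a baseline, since an old date proves a text predates generative tools but says nothing about whether it was accurate in the first place.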

Conclusion

The dark side of generative AI doesn’t arrive with the scream of a Terminator; it arrives with a whisper, a bland sentence, a forgotten fact, and a slightly too-perfect-looking image. Model collapse is the law of entropy applied to intelligence: a slow, irreversible drift toward a statistical mean where nothing new, rare, or beautifully chaotic can survive. If we continue to train our future assistants on the homogenized outputs of today’s models, we aren’t building a higher intelligence; we are building a Xerox machine that only prints in shades of beige, slowly burying the vibrant colors of human creativity under a landslide of mediocrity.

However, we are not passive victims. The solution lies in a return to valuing the messy, inefficient, gloriously unpredictable human spirit. By championing original research, preserving the outliers, and recognizing that the most valuable data point is the one the AI can’t predict, we can keep the engines running on the fuel of reality. The future of digital integrity won’t be won by the fastest AI, but by the most authentic humans. Let’s commit to keeping the web weird, wild, and unmistakably alive.
